L1 and L2 Regularization

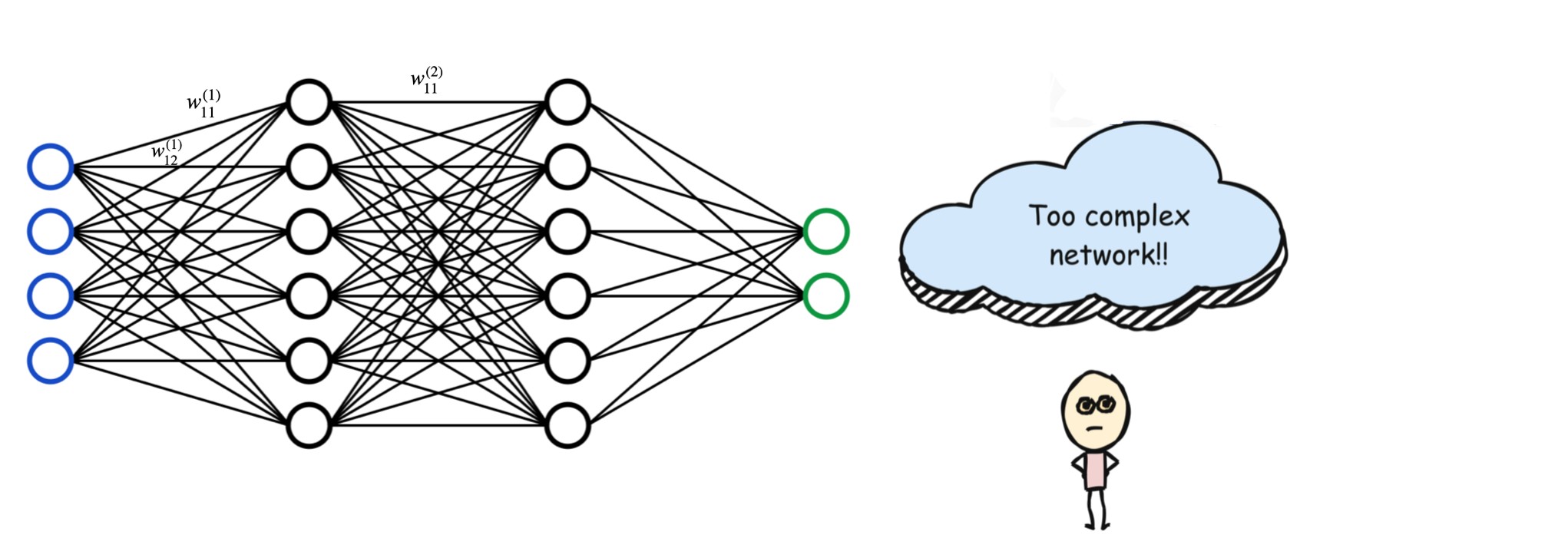

Deep learning models are extremely powerful because they can approximate highly complex functions. However, this power often comes with a downside: overfitting. When a neural network has too many parameters, it may learn the noise in the training data instead of the true underlying patterns.

The goal of regularization is to simplify such networks by controlling the magnitude of their weights and improving generalization.

Why Regularization Is Necessary

A neural network consists of layers of neurons connected by weights. These weights determine how much influence one neuron has on another. A complex network can have:

- Many neurons

- Dense interconnections

- A large number of weight parameters

When this happens, the model can fit the training data extremely well but fail to perform on unseen data.

Regularization addresses this problem by penalizing large weights, effectively encouraging the model to learn simpler and more robust representations.


Regularized Optimization: The Big Picture

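In standard deep learning optimization, we aim to minimize a loss function. If our loss (error) function is $L(w)$ or $E(w)$, the usual objective is:

$$\min_w \; L(w)$$

Regularization modifies this objective by adding a penalty term $R(w)$ on the weights, scaled by a regularization parameter $\lambda$:

$$\min_w \; L(w) + \lambda R(w)$$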

The two most common regularization techniques are L1 regularization and L2 regularization.

L1 Regularization (Lasso)

L1 regularization adds the L1 norm of the weight vector to the loss function:

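Usual optimization:

$$w^* = \arg\min_w \; L(w)$$

L1 regularized optimization:

$$w^* = \arg\min_w \; \left[ L(w) + \lambda \lVert w \rVert_1 \right]$$

where:

$$\lVert w \rVert_1 = \sum_{i=1}^{n} |w_i|$$

Here:

- $w$ represents the network weights

- $\lambda$ is the regularization parameter

- $n$ is the number of weight parameters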
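As a minimal sketch of the L1 regularized objective, the snippet below adds the penalty to a loss value that is assumed to have been computed already. The function names, the weight values, and the numbers for `data_loss` and `lam` (the regularization parameter $\lambda$) are illustrative assumptions, not taken from any particular library or model.

```python
import numpy as np

def l1_penalty(w):
    """L1 norm of the weight vector: the sum of absolute values."""
    return np.sum(np.abs(w))

def l1_regularized_loss(data_loss, w, lam):
    """Unregularized loss plus lam times the L1 norm of the weights."""
    return data_loss + lam * l1_penalty(w)

w = np.array([0.5, -1.5, 0.0, 2.0])  # illustrative weights, L1 norm = 4.0
data_loss = 0.8                       # illustrative unregularized loss value
lam = 0.1                             # illustrative regularization strength

total = l1_regularized_loss(data_loss, w, lam)
print(total)  # approximately 0.8 + 0.1 * 4.0 = 1.2
```

Note that the zero weight contributes nothing to the penalty, while every nonzero weight is charged in proportion to its absolute value.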

Gradient Descent Update Rule (L1)

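With L1 regularization, the gradient descent update rule becomes:

$$w_i \leftarrow w_i - \eta \left( \frac{\partial L}{\partial w_i} + \lambda \, \mathrm{sign}(w_i) \right)$$

where $\eta$ is the learning rate and $\mathrm{sign}(w_i)$ is the (sub)gradient of $|w_i|$.

This update introduces a constant force of magnitude $\eta \lambda$ that pushes every nonzero weight toward zero, regardless of how large or small the weight is.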

The most important property of L1 regularization is that it drives many weights exactly to zero. This effectively removes unnecessary connections in the network.
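The sketch below illustrates this behaviour with two update functions, assuming a learning rate `lr` and a regularization parameter `lam`. The first applies the sign-based (sub)gradient of the L1 penalty directly. The second is a soft-thresholding (proximal) variant, a commonly used alternative that is not described in the text above and is included here only as an assumption, because a plain subgradient step can overshoot zero instead of landing on it. All names and values are illustrative.

```python
import numpy as np

def l1_subgradient_step(w, grad, lr, lam):
    """One gradient descent step using the L1 subgradient lam * sign(w)."""
    return w - lr * (grad + lam * np.sign(w))

def soft_threshold_step(w, grad, lr, lam):
    """Proximal variant: take a plain gradient step, then shrink every weight
    toward zero by lr * lam, clipping at zero so small weights become exactly 0."""
    z = w - lr * grad
    return np.sign(z) * np.maximum(np.abs(z) - lr * lam, 0.0)

w = np.array([1.0, -0.03, 0.5])
grad = np.zeros_like(w)   # zero data gradient, to isolate the penalty's effect
lr, lam = 0.1, 0.5        # each step pulls weights by lr * lam = 0.05

w_sub = l1_subgradient_step(w, grad, lr, lam)
w_prox = soft_threshold_step(w, grad, lr, lam)

print(w_sub)   # the small weight -0.03 overshoots zero and flips sign
print(w_prox)  # the small weight is clipped to zero
```

Both variants move the large weights by the same fixed amount, which is the constant pull of the L1 penalty. They differ only in how they treat weights smaller than that pull.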

As highlighted in the comparison tables in the PDF (pages 6–7):

- L1 regularization produces sparse models

- It performs implicit feature selection

- It is most effective when many features are irrelevant or redundant

L2 Regularization (Ridge)

L2 regularization adds the squared L2 norm of the weights to the loss function.

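Usual optimization:

$$w^* = \arg\min_w \; L(w)$$

L2 regularized optimization:

$$w^* = \arg\min_w \; \left[ L(w) + \lambda \lVert w \rVert_2^2 \right]$$

where:

$$\lVert w \rVert_2^2 = \sum_{i=1}^{n} w_i^2$$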

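Gradient Descent Update Rule (L2)

With L2 regularization, using the penalty $\lambda \lVert w \rVert_2^2$, the weight update rule is:

$$w_i \leftarrow w_i - \eta \left( \frac{\partial L}{\partial w_i} + 2 \lambda w_i \right)$$

where $\eta$ is the learning rate. The factor of 2 comes from differentiating $w_i^2$; it is often absorbed into $\lambda$ by writing the penalty as $\frac{\lambda}{2} \lVert w \rVert_2^2$.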

Unlike L1 regularization, L2 regularization shrinks weights smoothly toward zero but generally does not make them exactly zero: the pull is proportional to the weight itself, so it weakens as the weight gets small.

This results in:

- Stable learning

- Distributed importance across features

- Dense but well-controlled models

L2 regularization works best when most features contribute a little rather than a few features dominating the prediction.
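To see the smooth shrinkage in isolation, the following sketch repeats an L2-penalized gradient step with the data gradient set to zero. It assumes the penalty $\lambda \lVert w \rVert_2^2$, whose gradient is $2 \lambda w$, so each step multiplies every weight by $1 - 2 \eta \lambda$. The function name and the values of `lr` ($\eta$) and `lam` ($\lambda$) are illustrative assumptions.

```python
import numpy as np

def l2_step(w, grad, lr, lam):
    """One gradient descent step for the penalty lam * ||w||_2^2,
    whose gradient with respect to w is 2 * lam * w."""
    return w - lr * (grad + 2.0 * lam * w)

w0 = np.array([1.0, -0.03, 0.5])
grad = np.zeros_like(w0)  # zero data gradient, to isolate the penalty's effect
lr, lam = 0.1, 0.5        # each step scales weights by 1 - 2 * lr * lam = 0.9

w = w0.copy()
for _ in range(50):
    w = l2_step(w, grad, lr, lam)

print(w)                    # every entry is much smaller, but none is zero
print(np.count_nonzero(w))  # 3
```

Because the shrinkage is multiplicative, large weights lose the most in absolute terms and all weights keep their sign, which is why the resulting model stays dense.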

The Role of the Regularization Parameter

The regularization parameter $\lambda$ controls the strength of the penalty term.

When $\lambda$ Is Too Small

- The regularization term becomes negligible

- The model behaves nearly like no regularization

- Overfitting may result: high training accuracy but poor generalization

When $\lambda$ Is Too Large

- The regularization term dominates the loss

- The model focuses too much on shrinking weights

- Underfitting may occur

Thus, choosing $\lambda$ involves balancing bias and variance.
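The effect of the regularization strength can be sketched on a small linear model, used here as a stand-in for a network because L2-regularized least squares has a closed-form solution. The synthetic data, the helper `ridge_fit`, and the chosen values of `lam` are all assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(30, 10))           # 30 synthetic samples, 10 features
true_w = np.zeros(10)
true_w[:3] = [2.0, -1.0, 0.5]           # only three features actually matter
y = X @ true_w + 0.1 * rng.normal(size=30)

def ridge_fit(X, y, lam):
    """Minimize ||X w - y||^2 + lam * ||w||^2 via the normal equations."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(n_features), X.T @ y)

lams = [0.0, 0.1, 10.0, 1000.0]
norms, train_mse = [], []
for lam in lams:
    w = ridge_fit(X, y, lam)
    norms.append(np.linalg.norm(w))
    train_mse.append(np.mean((X @ w - y) ** 2))
    print(f"lambda={lam:>7}: ||w||={norms[-1]:.3f}, train MSE={train_mse[-1]:.4f}")
```

As the penalty grows, the weight norm shrinks and the training error rises. In practice the value is usually picked by comparing error on held-out validation data, which this sketch does not include.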

Combining L1 and L2: Elastic Net

Limitations of L1 Regularization

- Encourages sparsity by pushing weights exactly to zero

- Can be unstable when features are highly correlated

- May arbitrarily eliminate useful features

Limitations of L2 Regularization

- Shrinks weights smoothly but never removes them

- Does not perform feature selection

- Keeps all features active, even weak ones

Elastic Net addresses these issues by blending both penalties into a single objective. It augments the loss function with both L1 and L2 regularization terms:

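$$L_{\text{EN}}(w) = L(w) + \lambda_1 \lVert w \rVert_1 + \lambda_2 \lVert w \rVert_2^2$$

where:

- $L(w)$ is the original loss function

- $\lVert w \rVert_1$ is the L1 norm

- $\lVert w \rVert_2^2$ is the squared L2 norm

- $\lambda_1$ controls sparsity

- $\lambda_2$ controls weight shrinkage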

Intuition Behind Elastic Net

Elastic Net applies two simultaneous forces during training:

- The L1 term pushes small and unimportant weights exactly to zero

- The L2 term prevents remaining weights from becoming too large

As a result, Elastic Net:

- Produces sparse yet stable models

- Handles correlated features better than L1 alone

- Improves generalization performance

The combined effect ensures that irrelevant connections are removed while important ones remain controlled.
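The two forces can be written down directly. The sketch below assumes an Elastic Net penalty of the form $\lambda_1 \lVert w \rVert_1 + \lambda_2 \lVert w \rVert_2^2$ and computes both the penalty and its (sub)gradient. The function names and the values of `lam1` and `lam2` are illustrative assumptions.

```python
import numpy as np

def elastic_net_penalty(w, lam1, lam2):
    """lam1 * ||w||_1 + lam2 * ||w||_2^2."""
    return lam1 * np.sum(np.abs(w)) + lam2 * np.sum(w ** 2)

def elastic_net_grad(w, lam1, lam2):
    """(Sub)gradient of the penalty: a constant pull lam1 * sign(w)
    plus a proportional pull 2 * lam2 * w."""
    return lam1 * np.sign(w) + 2.0 * lam2 * w

w = np.array([0.5, -1.5, 0.0, 2.0])  # illustrative weights
lam1, lam2 = 0.1, 0.01               # illustrative penalty strengths

penalty = elastic_net_penalty(w, lam1, lam2)
pen_grad = elastic_net_grad(w, lam1, lam2)

print(penalty)   # approximately 0.1 * 4.0 + 0.01 * 6.5 = 0.465
print(pen_grad)  # approximately [0.11, -0.13, 0.0, 0.14]
```

The L1 part contributes the same magnitude to every nonzero weight, while the L2 part grows with the weight, so the largest weight receives the strongest total pull.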

When to Use Elastic Net

Elastic Net is particularly effective when:

- The dataset contains many features

- Input features are highly correlated

- Feature selection and stability are both desired

- Pure L1 or pure L2 regularization performs poorly

Key Takeaways

- Overfitting arises from overly complex neural networks

- L1 regularization simplifies models by removing unnecessary weights

- L2 regularization stabilizes learning by shrinking weights

- The regularization parameter must be tuned carefully

- Combining L1 and L2 (Elastic Net) can give sparse yet stable models, especially when features are many and correlated

Conclusion

Regularization is a fundamental concept in deep learning. It ensures that models not only perform well on training data but also generalize effectively to unseen examples. By understanding and applying L1 and L2 regularization correctly, we can build neural networks that are both powerful and reliable.

In practice, a well-regularized model is often more valuable than a highly complex one.